While the technology for building artificial intelligence-powered chatbots has existed for some time, a new viewpoint piece in JAMA lays out the clinical, ethical, and legal aspects that must be considered before applying them in health care. And while the emergence of COVID-19, and the social distancing that accompanies it, has prompted more health systems to explore and deploy automated chatbots, the authors still urge caution and thoughtfulness before proceeding.

“We need to recognize that this is relatively new technology and even for the older systems that were in place, the data are limited,” said the viewpoint’s lead author, John D. McGreevey III, MD, an associate professor of Medicine in the Perelman School of Medicine at the University of Pennsylvania. “Any efforts also need to realize that much of the data we have comes from research, not widespread clinical implementation. Knowing that, evaluation of these systems must be robust when they enter the clinical space, and those operating them should be nimble enough to adapt quickly to feedback.”

McGreevey, joined by C. William Hanson III, MD, chief medical information officer at Penn Medicine, and Ross Koppel, PhD, FACMI, a senior fellow at the Leonard Davis Institute of Healthcare Economics at Penn and professor of Medical Informatics, wrote "Clinical, Legal, and Ethical Aspects of AI-Assisted Conversational Agents." In it, the authors lay out 12 distinct focus areas that should be considered when planning to implement a chatbot, or, more formally, a "conversational agent," in clinical care.

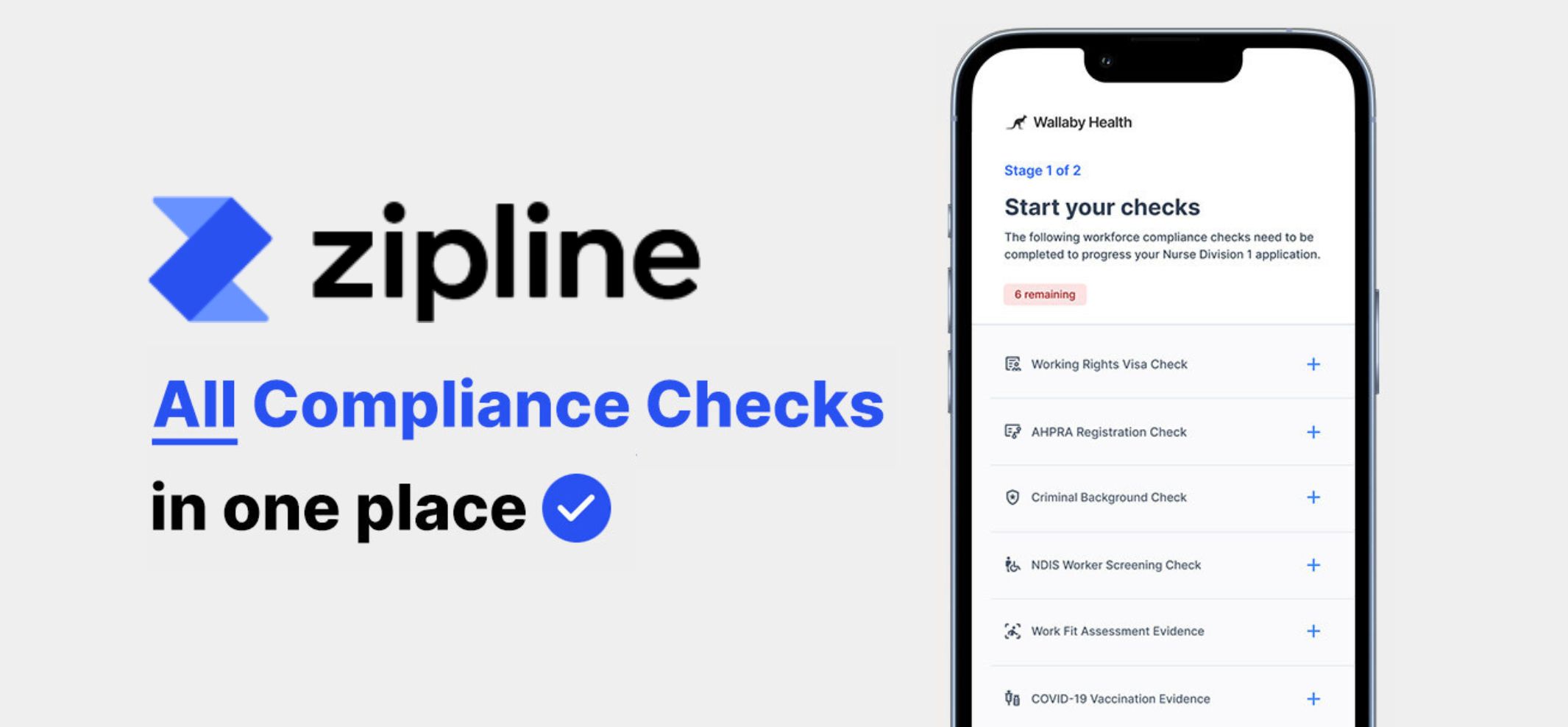

Chatbots are tools used to communicate with patients by text message or voice. Many chatbots are powered by artificial intelligence (AI). This paper specifically discusses chatbots that use natural language processing, an AI technique that seeks to "understand" the language used in conversations and draws threads and connections from it to provide meaningful and useful answers.

In health care, those messages, and people's reactions to them, are extremely important and carry tangible consequences.

“We are increasingly in direct communication with our patients through electronic medical records, giving them direct access to their test results, diagnoses and doctors’ notes,” Hanson said. “Chatbots have the ability to enhance the value of those communications on the one hand, or cause confusion or even harm, on the other.”

For instance, how a chatbot handles someone telling it something as serious as “I want to hurt myself” has many different implications.

In the self-harm example, several of the focus areas laid out by the authors apply. First and foremost, it touches on the "Patient Safety" category: Who monitors the chatbot, and how often? It also touches on "Trust and Transparency": Would this patient actually take a response from a known chatbot seriously? Unfortunately, it also raises questions in the paper's "Legal and Licensing" category: Who is accountable if the chatbot fails in its task? A question under the "Scope" category may apply here, too: Is this a task well suited to a chatbot, or is it something that should still be handled entirely by humans?

In their viewpoint, the team believes they have laid out key considerations that can inform a framework for decision-making when implementing chatbots in health care. Their considerations should apply even when rapid implementation is required to respond to events like the spread of COVID-19.

“To what extent should chatbots be extending the capabilities of clinicians, which we’d call augmented intelligence, or replacing them through totally artificial intelligence?” Koppel said. “Likewise, we need to determine the limits of chatbot authority to perform in different clinical scenarios, such as when a patient indicates that they have a cough, should the chatbot only respond by letting a nurse know or digging in further: ‘Can you tell me more about your cough?’”

Chatbots have the potential to significantly improve health outcomes and lower health systems' operating costs, but evaluation and research will be key to realizing that potential: both to ensure smooth operation and to maintain the trust of patients and health care workers alike.

“It’s our belief that the work is not done when the conversational agent is deployed,” McGreevey said. “These are going to be increasingly impactful technologies that deserve to be monitored not just before they are launched, but continuously throughout the life cycle of their work with patients.”

A version of this article was originally published on:

https://www.sciencedaily.com/releases/2020/07/200724120154.htm